Why I Left ChatGPT (And What OpenClaw Taught Me About Control)

I Was Trapped and Didn't Know It

I work in DevRel at a startup called n8n, and a big part of my job is making videos. A few months back, I took a deep dive into my process to see where I could start automating such a creative workflow.

For me, a very large part of that was using AI to help me put together scripts for the technical videos I make.

Because my first AI experience was with OpenAI, that was the lens through which I wrote.

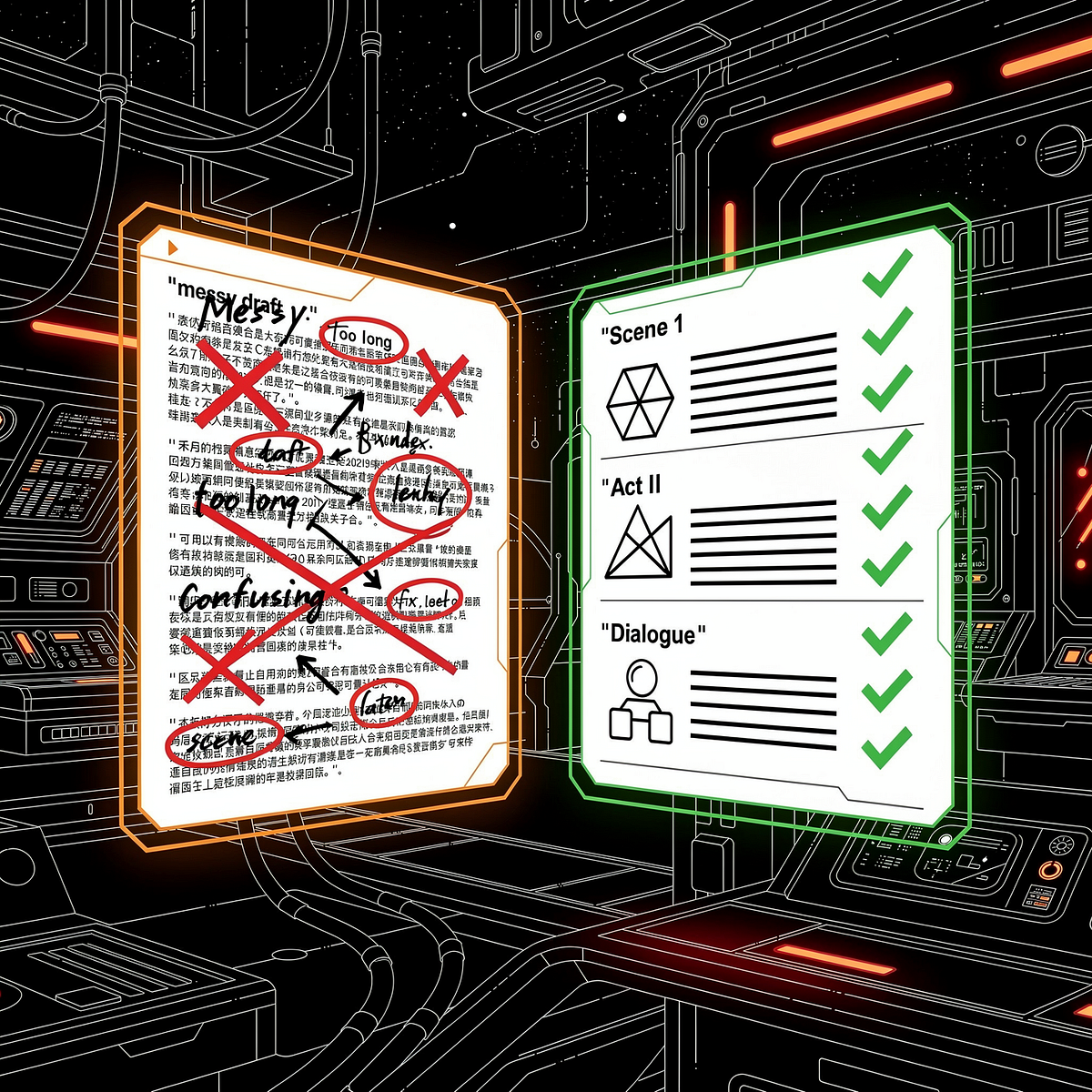

I used ChatGPT for years. It started as my intro to AI and eventually took over editing my scripts and holding my context. I felt comfortable and didn't see a reason to leave. It wasn't writing the scripts, only tuning what I drafted, so why did it matter?

The scripts were a bit fluffy around the edges, sure. I didn't notice, however, because I only used ChatGPT for scripts. I used other models for automation, so I had no basis for comparison in a creative domain.

When I switched to Cursor, the quality difference was immediate. Tighter writing, better structure, fewer filler phrases. Like swapping a well-meaning intern for a senior editor. Granted, my videos are very technical, so it almost makes sense that a more technical AI can help edit more technical videos.

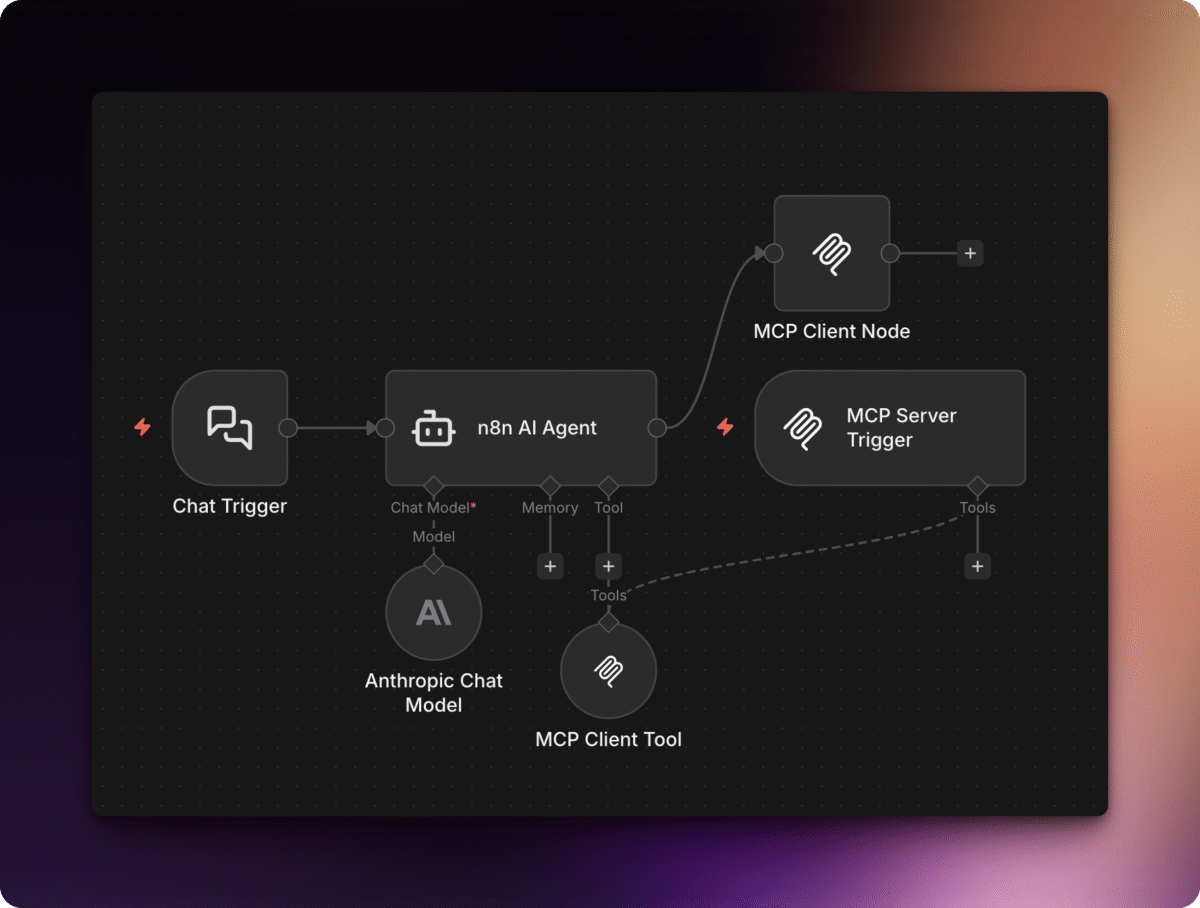

The migration was painless - not because ChatGPT made it easy, but because I'd already built my tools as MCP servers running through n8n. When Claude came around, I already knew the dance that was coming, and once again the migration was painless. Then came OpenClaw, and I knew what to do.

If you've never heard of MCP, or want a deeper dive, I wrote a companion post on my business site that explains how MCP works in n8n.

My calendar access, note-taking workflows, event automation: none of it lived inside ChatGPT. Or Cursor. Or Claude. Or OpenClaw. Or whatever else comes along. The models and UIs have changed. The plumbing stayed the same, and I'm not scared of change, because my handy toolbox, n8n, goes with me wherever I go.

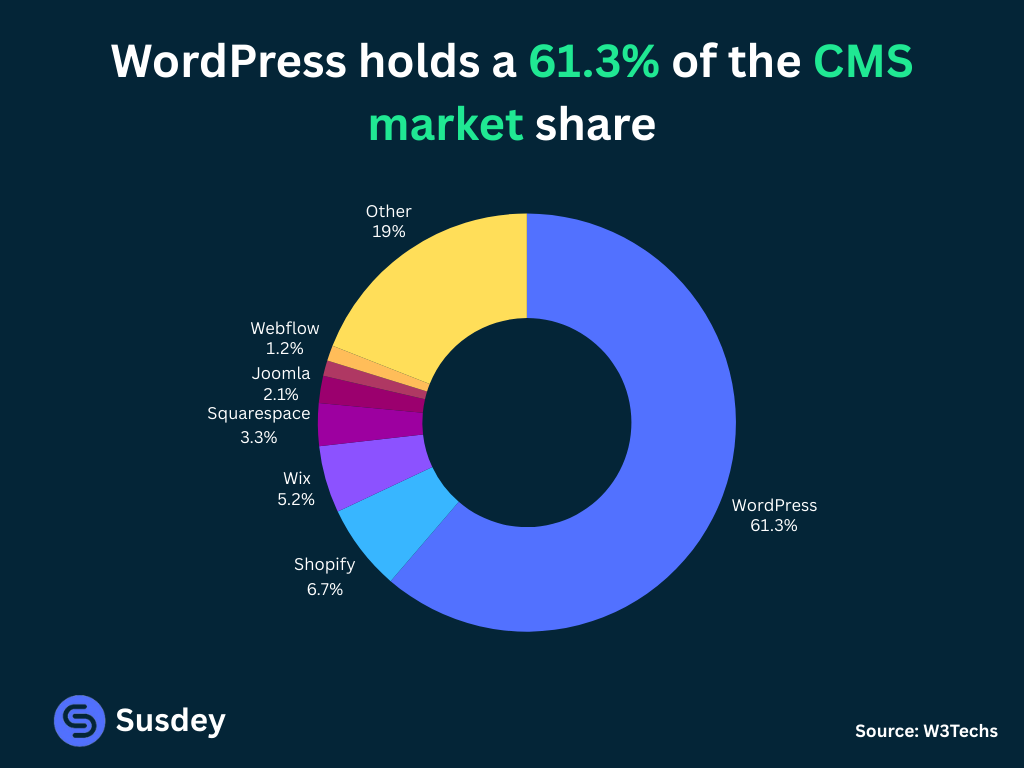

The WordPress Lesson

I had control. Control over my expenses.

Export your site. Move hosts. Overnight if you have to. That single capability supported an entire ecosystem. Hosts and eventually plugin makers competed on quality instead of lock-in. Clients trusted the investment because they'd never be held hostage.

We see that in n8n as well. Workflows are simply JSON files. Want to migrate? Take your files with you.
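As a rough illustration of what "take your files with you" means, here is a minimal sketch that reads an exported workflow and lists its nodes. The workflow below is a trimmed, made-up example rather than one of my real exports; real files carry more fields, but `name`, `nodes`, and `connections` are the core of it.

```python
import json

# A trimmed, hypothetical workflow export for illustration only.
EXPORTED_WORKFLOW = """
{
  "name": "Add calendar event",
  "nodes": [
    {"name": "Webhook", "type": "n8n-nodes-base.webhook", "parameters": {}},
    {"name": "Google Calendar", "type": "n8n-nodes-base.googleCalendar", "parameters": {}}
  ],
  "connections": {}
}
"""


def summarize(workflow_json: str) -> list[str]:
    """Return one 'name (type)' line per node in an exported workflow."""
    workflow = json.loads(workflow_json)
    return [f'{node["name"]} ({node["type"]})' for node in workflow["nodes"]]


print(summarize(EXPORTED_WORKFLOW))
```

Because the export is ordinary JSON, anything that can read a text file can inspect, diff, back up, or move it.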

The Portability Principle

The ability to walk away is what keeps vendors honest and keeps power with the user.

- Swap the model, keep the plumbing

- Proprietary connectors = lock-in

- Standard protocols = freedom

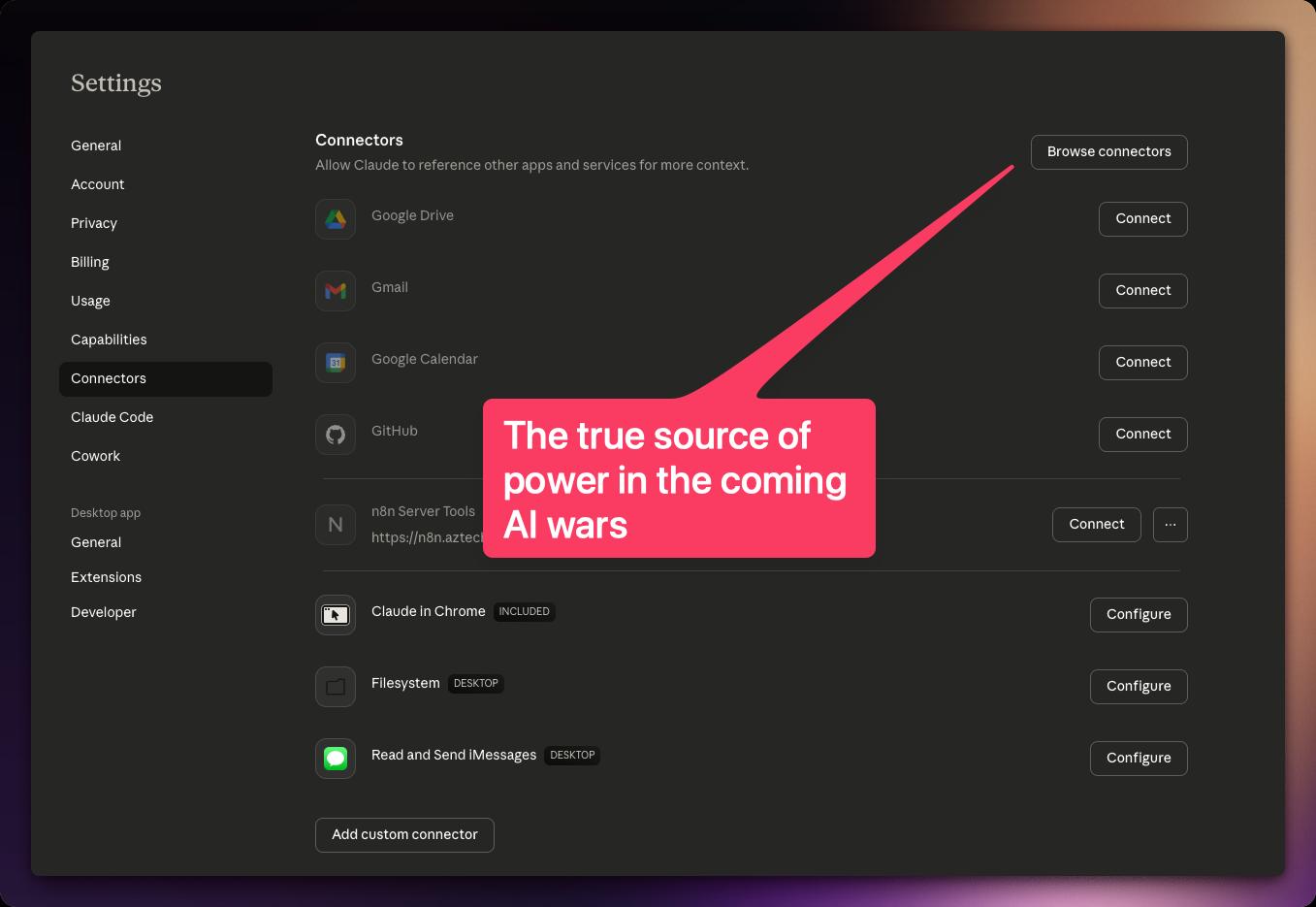

AI tooling is at exactly the same inflection point right now. If your tools are MCP servers you own, your AI is replaceable. If your tools are ChatGPT custom GPTs, Claude Projects, or Gemini Extensions, you're the one locked in.

It's not the text an AI produces that creates the value; it's the use of tools that makes AI agents powerful. And you're the one who decides how that power is handed out, so part of that responsibility is yours.

Think of it this way: n8n is to AI what JSON is to web development. JSON didn't replace anything; it became the universal format that every tool agrees on. n8n does the same thing for AI workflows. It's the common layer that lets any AI talk to any tool, without either side needing to know about the other.

You can have it easy, or you can have it portable and private. But that tradeoff is yours to make.

MCP - The Protocol That Makes This Possible

MCP - Model Context Protocol - is an open standard that lets any AI model call external tools through a single, universal interface. Instead of building custom integrations for every AI provider, you build once and connect anywhere.
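To make "a single, universal interface" a little more concrete: MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method. The sketch below only builds such a message; the tool name `create_event` and its arguments are hypothetical, and a real client would normally go through an MCP library instead of assembling JSON by hand.

```python
import json


def tools_call_request(request_id: int, tool_name: str, arguments: dict) -> str:
    """Build an MCP `tools/call` message as a JSON-RPC 2.0 string."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })


# "create_event" is a made-up tool; a client would first send "tools/list"
# to discover which tools the server actually exposes.
message = tools_call_request(1, "create_event", {"title": "Record video"})
print(message)
```

The message looks the same no matter which model decided to send it, which is the point: the server never needs to know who is on the other end.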

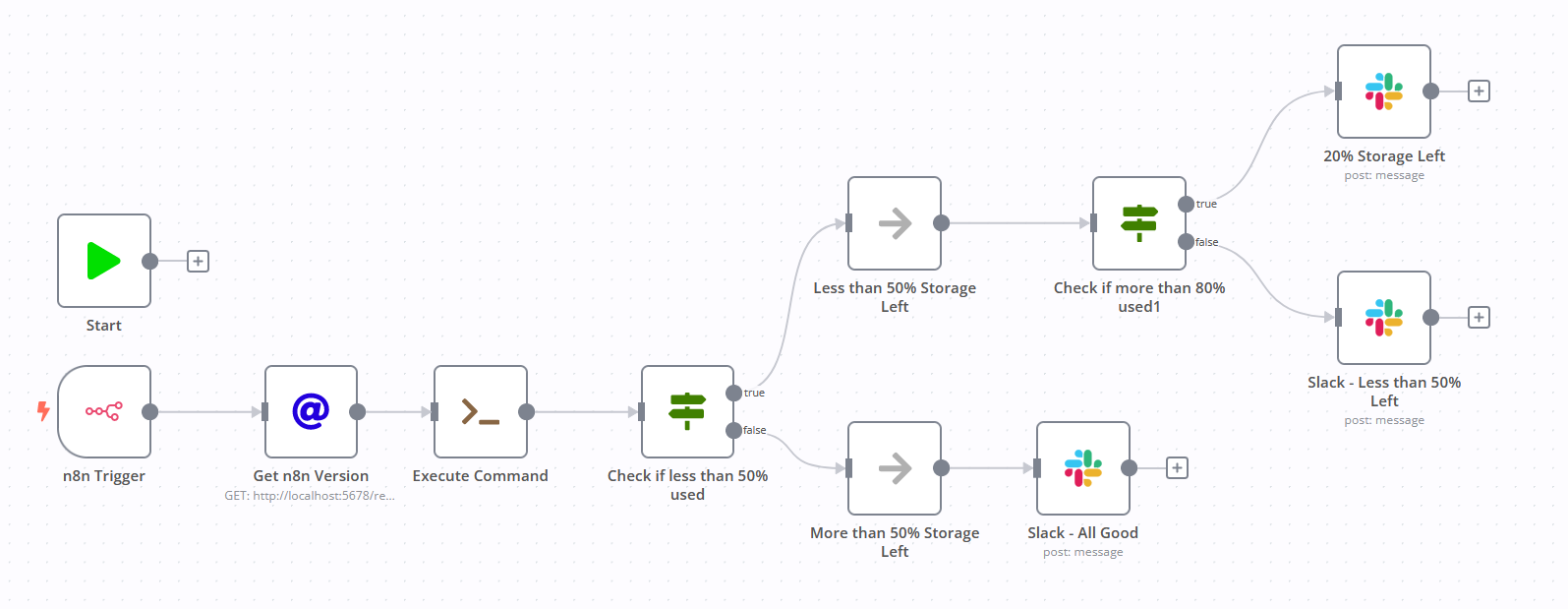

I run all my tools through n8n's MCP implementation. My AI connects to n8n, n8n connects to everything else. Google Calendar, Obsidian, Slack - n8n handles all the OAuth on the backend. The AI never touches those APIs directly.

Without n8n

AI → OAuth to Google → OAuth to Slack → OAuth to Notion

Each connection is provider-specific. Change your AI, rebuild everything.

With n8n

AI → MCP (header auth) → n8n → handles all OAuth

One connection. Swap the AI anytime. Tools don't notice.
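Here is a small sketch of what that single connection looks like from the AI's side. The URL and token are placeholders I made up for the example, and the request is only prepared, never sent; a real MCP client library would also handle transport details that are left out here.

```python
import json
import urllib.request

# Placeholder values for illustration, not a real endpoint or secret.
N8N_MCP_URL = "https://n8n.example.com/mcp/my-tools"
N8N_TOKEN = "replace-me"


def build_mcp_request(payload: dict) -> urllib.request.Request:
    """Prepare (but do not send) a POST to the n8n MCP endpoint."""
    return urllib.request.Request(
        N8N_MCP_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {N8N_TOKEN}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_mcp_request({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
print(request.full_url, request.get_method())
```

Notice what is missing: no Google, Slack, or Notion credentials. The AI holds one header token, and swapping the AI means handing that URL and token to the next client.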

n8n actually has four distinct ways to work with MCP: a Trigger, a Node, a Gateway, and a Tool, each serving a different purpose. For this post, what matters is the principle: your tools should outlive your AI provider.

What OpenClaw Made Obvious

OpenClaw is an open-source framework that turns an AI model into a persistent assistant, running on your machine, scheduling its own tasks, managing memory across sessions. It feels less like chatting with a model and more like having an employee who happens to be software.

Cursor, Claude Code, and similar tools have introduced persistent memory through markdown files and conversation compaction to reduce hallucinations.

OpenClaw takes this further. It gives the AI the ability to edit its own configuration, set cron schedules to wake itself up, and manage its own memory files. Pair that with MCP tools like a browser, and it can do almost anything you can.

What's interesting is that not all AI agents are the same: some run inside platforms like n8n, while others, like OpenClaw, run externally and connect in. That distinction changes everything about how you architect your tools.

OpenClaw is model-agnostic. The framework doesn't care if it's running Claude, GPT, Gemini, or local. The tools, memory, scheduling - all infrastructure you own. The model is just the brain you plug in.

Want to see it in action? Here's a raw video of me integrating MCP tools with Cortana, my OpenClaw assistant:

I change one line of config. My tools stay. My workflows stay. My data stays.
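The idea can be sketched like this. To be clear, the structure below is hypothetical and is not OpenClaw's actual config format; the model names, URL, and path are placeholders. It only illustrates that the model is one field among several, and the others don't move when it changes.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class AssistantConfig:
    """Hypothetical assistant config; not OpenClaw's real schema."""
    model: str                    # the only field that changes on a swap
    mcp_servers: tuple[str, ...]  # tool endpoints you own
    memory_dir: str               # memory files on your own disk


current = AssistantConfig(
    model="provider-a/model-x",
    mcp_servers=("https://n8n.example.com/mcp/my-tools",),
    memory_dir="~/assistant/memory",
)

# Swapping the model touches one field; everything else carries over.
swapped = replace(current, model="provider-b/model-y")
print(swapped)
```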

It's Happening On Your Phone Too

Apple's Shortcuts app is basically n8n for your phone. The local model works for small tasks but chokes on large text. The solution is the same layered approach:

📱 Phone

iOS Shortcuts → webhooks → n8n

🖥️ Desktop

OpenClaw → MCP → n8n

🖧 Server

Scheduled workflows → APIs → n8n

n8n is the constant. That's not just because I work there; it's because I have full control over my AI and my automation. That need for control has protected me in the past, and it's helped me learn a lot.

The Real Promise Isn't AGI (at least, not for me)

This is what I'm looking for:

⚡ Local AI Models

AI should be an appliance like a fridge, not a service like Disney+. If I were running models like Opus 4.5-4.6 at home, I wouldn't be able to tell the difference between that and AGI, especially with all the MCP tools I've given it.

🔒 Full Privacy

Data never leaves your machine. No training on your prompts, no third-party access, no terms of service that change overnight. A local model you can be completely open and honest with: that's not a feature, that's a relationship.

🏠 True Ownership

Your hardware, your workflows, your drives. No vendor can revoke access, change pricing, or sunset a feature you depend on. The infrastructure is yours the same way your plumbing is yours.

I know there is a lot of talk about AGI. And while I think it's an important discussion to have, it's not the one that gets me excited. Not because I don't think it's possible, but because I'm not entirely convinced we need AGI. We can barely even control the models we have now.

What I think we now need are local models on the level of Opus 4.5-4.6 that we can control, in a home appliance like a fridge.

I know we are still not even close yet (by tech time, not normal time), but that doesn't mean we can't start preparing for that eventual future now.

What You Should Do Today

That last one is worth expanding on. Everyone's worried about losing their job to AI, and I get that fear. But so far, what I'm actually seeing is that the people who treat their AI like third-party contractors will be the ones taking all the jobs.

They work like a puppeteer: someone who thinks in terms of orchestration rather than one project or one job, and who can tell a crowd what to do and how to do it.

But just like I took precautions with new contractors at my web design firm, I take the same precautions with my AI.

OpenClaw doesn't get an API key with full access to all of my calendars. It gets an n8n MCP server with metered access to a single calendar.

Over time, as it proves itself, I elevate its privileges in n8n. And when a better model comes along, I take the skill files from OpenClaw, which describe every workflow and MCP server it had access to, and hand them to the next AI.

New model reads the docs, connects to the same servers, picks up where the last one left off.

Hire fast, scope tight, replace without losing your infrastructure.
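The scoping described above (one calendar, metered access, privileges widened over time) can be sketched in a few lines. In my setup that enforcement lives in n8n, not in Python, so treat this as an illustration of the principle; the class, method names, and quota are all made up for the example.

```python
from dataclasses import dataclass


class AccessDenied(Exception):
    """Raised when a call falls outside the granted scope or quota."""


@dataclass
class ScopedCalendarTool:
    """Hypothetical tool wrapper that limits which calendars and how many calls."""
    allowed_calendars: set[str]
    max_calls: int
    calls_made: int = 0

    def list_events(self, calendar: str) -> str:
        if calendar not in self.allowed_calendars:
            raise AccessDenied(f"calendar '{calendar}' is out of scope")
        if self.calls_made >= self.max_calls:
            raise AccessDenied("call quota exhausted")
        self.calls_made += 1
        # A real tool would call the calendar API here; this returns a stub.
        return f"events from {calendar}"

    def grant(self, calendar: str) -> None:
        """Widen the scope once the assistant has earned it."""
        self.allowed_calendars.add(calendar)


tool = ScopedCalendarTool(allowed_calendars={"work"}, max_calls=2)
print(tool.list_events("work"))
```

The assistant only ever sees `list_events`. Whether it can reach a second calendar is decided on my side of the wall, and that decision survives a model swap.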

Portability is power. 25 years in web hosting. Same lesson.

Dive Deeper

I wrote a full series on my business blog breaking down every piece of this architecture. Start with the overview or jump to what interests you:

📚 How MCP Works in n8n

The complete overview - Access, Trigger, Node, and Tool explained with GIFs and real workflows.

Read the MCP Guide